Agentic AI Governance Gap: Why 79 Percent Deploy But Only 21 Percent Are Ready

This week AWS announced AWS Agent Registry in preview. Agents get something like a digital ID card: a stable identity, trackable permissions, an audit trail. That is the right feature at the right time - because the numbers show the market has reached a point where governance is no longer optional.

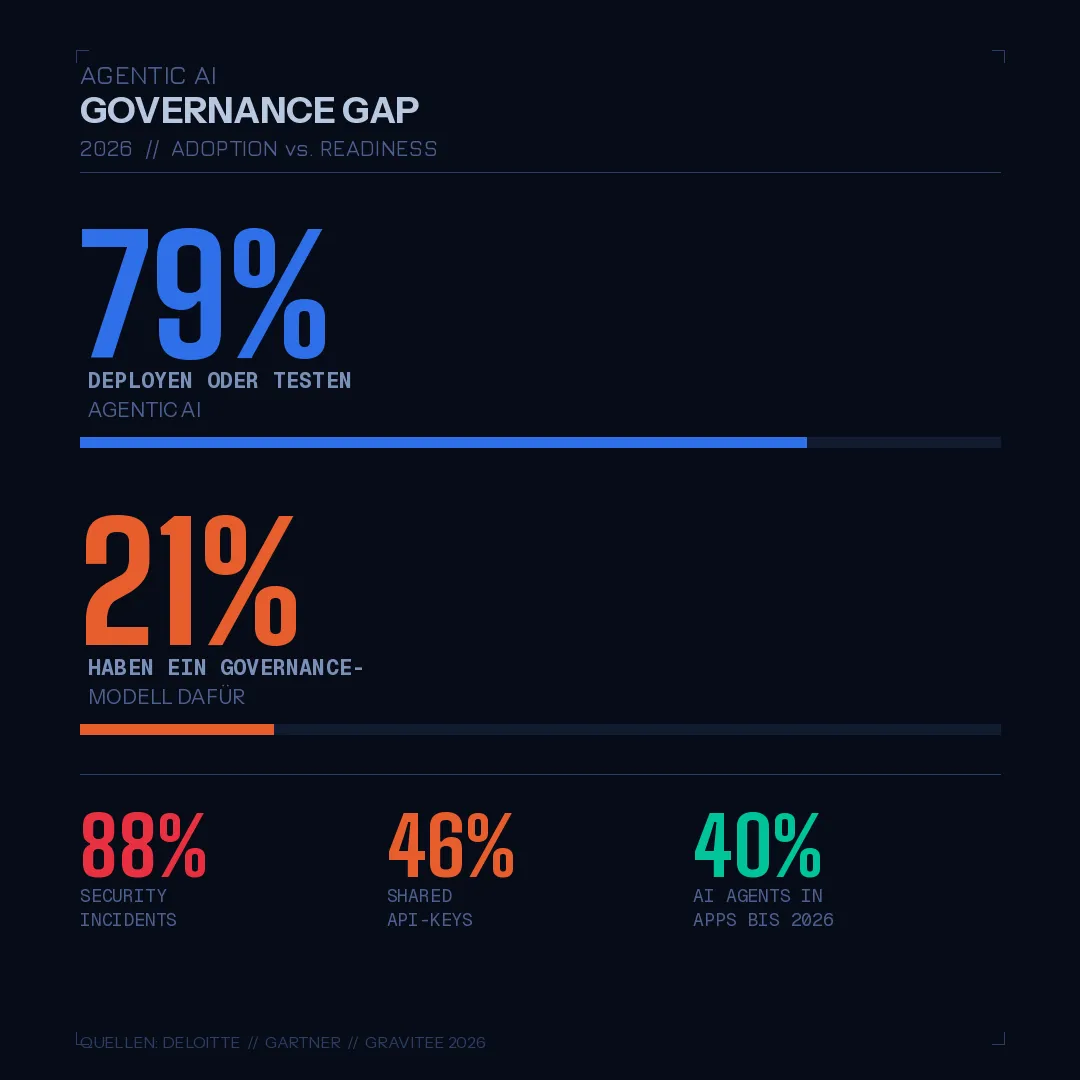

According to Deloitte, 79 percent of organisations are deploying or testing Agentic AI, but only 21 percent have a mature governance model for it. At the same time, Gravitee found that 88 percent of companies using AI agents have already had security incidents.

I have seen this pattern twice before.

The Pattern Is Not New

First with Shadow IT: business units procured cloud services and SaaS tools without IT knowing. It took about ten years before identity governance became standard in German enterprises - before IAM solutions, recertification cycles, and access reviews became mandatory.

Then with cloud adoption itself: AWS accounts were created without governance frameworks, without landing zones, without Control Tower. The standard sequence was three to five accounts without guardrails, then a security incident or a C5 audit, then the governance project. Cleaning up retroactively costs three times as much as building governance in from the start.

With Agentic AI the same thing is happening, just faster. Gartner projects that by the end of 2026, 40 percent of all enterprise applications will have AI agents built in. That is not five years away. That is eight months. Agents are spreading faster than Shadow IT or cloud did, and the blast radius is larger because agents act autonomously.

What "Governance Gap" Means in Practice

An agent without governance is not just unruly. It is an autonomous actor that makes API calls, reads data, and takes decisions, yet no one in the organisation can say who it is, what it is allowed to do, or what it did in the last 30 days.

That sounds abstract, so here is the concrete version: in a BSI C5:2026 audit you already need to demonstrate traceability for AI systems. The new requirements for AI system traceability are not a future project. Anyone who only realises this at the next audit faces an expensive remediation problem.

The three questions every CISO team must answer before the next agent deployment:

Question 1: Identity

Who is this agent? What can it do? How is it identified in audit logs?

That sounds like a basic IT question. It is. And yet in DACH client conversations I rarely hear a clear answer. Agents are deployed like batch jobs: a service account, a few permissions, done. No name, no owner, no lifecycle.

The AWS Agent Registry changes that: agents get a managed identity with permissions tracking and an audit trail. Until now I have been building this manually in client projects, using IAM tagging conventions, CloudTrail filters, and a bit of Terraform discipline. It is good that AWS now offers it as a first-class feature.
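Here is a minimal Python sketch of such a tagging convention. It assumes a hypothetical set of required tag keys on each agent's IAM role: agent-id, agent-owner, and agent-lifecycle. The function takes tags as the list of Key/Value pairs that IAM returns for role tags and reports which required keys are missing or empty. These key names are illustrative, not an AWS standard, and the sketch leaves out how the tags are fetched.

```python
from typing import Dict, List

# Hypothetical tagging convention: these keys are illustrative, not an AWS standard.
REQUIRED_AGENT_TAGS = ("agent-id", "agent-owner", "agent-lifecycle")


def missing_agent_tags(tags: List[Dict[str, str]]) -> List[str]:
    """Return required tag keys that are absent or empty on an agent's IAM role.

    `tags` uses the [{"Key": ..., "Value": ...}] shape IAM returns for role tags.
    """
    present = {t["Key"]: t.get("Value", "").strip() for t in tags}
    return [key for key in REQUIRED_AGENT_TAGS if not present.get(key)]


if __name__ == "__main__":
    role_tags = [
        {"Key": "agent-id", "Value": "invoice-triage"},
        {"Key": "agent-owner", "Value": ""},  # owner tag exists but is empty
    ]
    print(missing_agent_tags(role_tags))  # ['agent-owner', 'agent-lifecycle']
```

A check like this can run in a deployment pipeline. That is one way to make sure the identity question is answered before an agent reaches production.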

The identity question must be answered before an agent goes to production - not after the first incident.

Question 2: Permissions

What is this agent allowed to do, in which context, with which data?

IAM policies for agents follow the same principles as for people: least privilege, reviewed regularly, scoped to the task. The knowledge exists. The practice lags behind.

What I see in projects: agents receive permissions that fit the initial development phase. They are too broad, too permanent, and not scoped to the production context. That is the same anti-pattern as EC2 instance roles with wildcard (*) permissions. The consequence is not "agent does something malicious". The consequence is that when something goes wrong, the attacker or the malfunctioning agent has more room to operate than necessary.
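One simple check for this anti-pattern is sketched below in Python. It scans an IAM policy document and flags Allow statements that grant all actions ("*"), a whole service ("s3:*"), or all resources. It is a heuristic only. It ignores NotAction and NotResource, and some AWS actions support only Resource "*", so a hit means "review this", not "reject automatically". The bucket name in the example is hypothetical.

```python
from typing import Any, Dict, List


def _as_list(value: Any) -> List[str]:
    # IAM accepts Action/Resource as a single string or a list of strings.
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def overly_broad_statements(policy: Dict[str, Any]) -> List[int]:
    """Return the indexes of Allow statements that grant "*" actions,
    service-wide actions such as "s3:*", or access to Resource "*".

    Heuristic only: NotAction/NotResource are ignored, and some actions
    legitimately support only Resource "*", so hits need human review.
    """
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be an object
        statements = [statements]
    flagged = []
    for index, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        if "*" in actions or "*" in resources or any(a.endswith(":*") for a in actions):
            flagged.append(index)
    return flagged


if __name__ == "__main__":
    # Example policy as it might look after the development phase.
    dev_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": "s3:GetObject",
             "Resource": "arn:aws:s3:::example-agent-data/*"},
            {"Effect": "Allow", "Action": "*", "Resource": "*"},
        ],
    }
    print(overly_broad_statements(dev_policy))  # [1]
```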

Concrete steps: each agent gets its own IAM role, separate from the role of the surrounding system. Policies are scoped, with conditions on aws:RequestedRegion and aws:SourceVpc where appropriate. Permissions are reviewed at least quarterly.
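Here is a minimal sketch of such a scoped policy, built as a Python dict and serialised to IAM policy JSON. The condition keys aws:RequestedRegion and aws:SourceVpc are real AWS global condition keys. The function name, bucket, prefix, Region, and VPC ID in the example are hypothetical. Note that aws:SourceVpc is only present when a request arrives through a VPC endpoint. Because this policy only allows, calls made outside that endpoint are implicitly denied.

```python
import json
from typing import Any, Dict, List, Optional


def agent_policy(
    actions: List[str],
    resources: List[str],
    allowed_regions: List[str],
    source_vpc: Optional[str] = None,
) -> Dict[str, Any]:
    """Build a scoped identity policy for one agent's dedicated IAM role.

    aws:RequestedRegion limits the Regions the agent may call;
    aws:SourceVpc (set only for requests via a VPC endpoint) limits
    calls to one VPC.
    """
    condition: Dict[str, Dict[str, Any]] = {
        "StringEquals": {"aws:RequestedRegion": allowed_regions}
    }
    if source_vpc:
        condition["StringEquals"]["aws:SourceVpc"] = source_vpc
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AgentScopedAccess",
                "Effect": "Allow",
                "Action": actions,
                "Resource": resources,
                "Condition": condition,
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical agent that only reads objects under one prefix of an example bucket.
    policy = agent_policy(
        actions=["s3:GetObject"],
        resources=["arn:aws:s3:::example-agent-data/invoices/*"],
        allowed_regions=["eu-central-1"],
        source_vpc="vpc-0123456789abcdef0",
    )
    print(json.dumps(policy, indent=2))
```

Both keys sit in one StringEquals block, so both must match (logical AND). A list of several Regions for aws:RequestedRegion matches if any one of them does.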

Question 3: Audit Trail

What did this agent do, when, on whose behalf?

By default, CloudTrail records management API calls. It also records data events, such as S3 object reads, once these are enabled. Security Lake aggregates this data across accounts. Anyone on AWS has the technical means for a complete audit trail, but only if agents are properly identified. An agent running under a generic service account appears in CloudTrail as an undifferentiated stream of API calls. An agent with a managed identity from the Agent Registry appears as an auditable unit.
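The Python sketch below shows why the identity matters. It groups CloudTrail records by the IAM role behind each call and rests on three assumptions. First, the records have already been parsed from CloudTrail log files (the Records array). Second, each agent runs under its own role. Third, agent roles follow a hypothetical naming convention and start with agent-. Calls from a shared role such as app-backend are skipped, which is exactly the point: they cannot be attributed to a specific agent.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Optional


def role_name(record: Dict[str, Any]) -> Optional[str]:
    """Return the IAM role name behind an AssumedRole CloudTrail record, else None."""
    identity = record.get("userIdentity", {})
    if identity.get("type") != "AssumedRole":
        return None
    # ARN shape: arn:aws:sts::<account-id>:assumed-role/<role-name>/<session-name>
    resource = identity.get("arn", "").split(":", 5)[-1]
    parts = resource.split("/")
    if len(parts) >= 3 and parts[0] == "assumed-role":
        return parts[1]
    return None


def calls_per_agent(records: Iterable[Dict[str, Any]], prefix: str = "agent-") -> Dict[str, Counter]:
    """Count API calls per agent role; `prefix` is a hypothetical naming convention."""
    summary: Dict[str, Counter] = {}
    for record in records:
        name = role_name(record)
        if name is None or not name.startswith(prefix):
            continue  # skip identities that are not agent roles, e.g. a shared backend role
        call = f'{record.get("eventSource")}:{record.get("eventName")}'
        summary.setdefault(name, Counter())[call] += 1
    return summary


if __name__ == "__main__":
    def event(arn: str, source: str, name: str) -> Dict[str, Any]:
        return {"userIdentity": {"type": "AssumedRole", "arn": arn},
                "eventSource": source, "eventName": name}

    records = [
        event("arn:aws:sts::111122223333:assumed-role/agent-invoice-triage/run-42",
              "s3.amazonaws.com", "GetObject"),
        event("arn:aws:sts::111122223333:assumed-role/agent-invoice-triage/run-43",
              "s3.amazonaws.com", "GetObject"),
        event("arn:aws:sts::111122223333:assumed-role/app-backend/i-0abc",
              "s3.amazonaws.com", "PutObject"),
    ]
    print(calls_per_agent(records))
```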

BSI C5:2026 takes this seriously: its requirements for the traceability of AI systems are not optional. Anyone who cannot produce a traceable audit trail for their agents should expect a finding at the next audit.

What Good Governance Looks Like

I am not describing this as a target state for 2028. This is the minimum for today.

Agent inventory: Not a spreadsheet. A managed registry with identities and permissions. The AWS Agent Registry is a good starting point. Anyone not yet standardised on Amazon Bedrock builds the equivalent with IAM tagging and a central account-level inventory.

Human accountability: Every agent has a named owner. That person is responsible for the agent's behaviour - not the platform, not the tool, not "the AI". When an incident response call asks "Who is responsible for agent X?", there must be an answer.

Review cycle: Permissions, scope, and behaviour are reviewed at least quarterly. This is not extra work if governance is built in from the start. It is significant extra work if you build it retroactively.

C5/NIS2 integration: Agent governance is not a parallel discipline to existing compliance. It is part of it. BSI C5:2026 has explicit requirements for AI system traceability. NIS2 includes risk management requirements that cover agents as components of critical processes. The compliance programme must cover agents, not work around them.
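For teams building the equivalent themselves, the inventory, owner, and review items above can be sketched as a Python data structure plus a check. This is a hypothetical record shape, not the data model of the AWS Agent Registry. "Quarterly" is approximated as 90 days, and all names, ARNs, and dates in the example are illustrative.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# "Quarterly" interpreted as 90 days; an illustrative assumption.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class AgentRecord:
    agent_id: str                # hypothetical internal identifier
    role_arn: str                # the agent's dedicated IAM role
    owner: Optional[str]         # named human owner, None if unassigned
    last_review: Optional[date]  # date of the last permission/scope review


def governance_findings(inventory: List[AgentRecord], today: date) -> List[str]:
    """List inventory entries that lack a named owner or are overdue for review."""
    findings: List[str] = []
    for agent in inventory:
        if not agent.owner:
            findings.append(f"{agent.agent_id}: no named owner")
        if agent.last_review is None:
            findings.append(f"{agent.agent_id}: never reviewed")
        elif today - agent.last_review > REVIEW_INTERVAL:
            due = agent.last_review + REVIEW_INTERVAL
            findings.append(f"{agent.agent_id}: review overdue since {due}")
    return findings


if __name__ == "__main__":
    inventory = [
        AgentRecord("invoice-triage",
                    "arn:aws:iam::111122223333:role/agent-invoice-triage",
                    "jane.doe@example.com", date(2026, 1, 5)),
        AgentRecord("ticket-summary",
                    "arn:aws:iam::111122223333:role/agent-ticket-summary",
                    None, None),
    ]
    for finding in governance_findings(inventory, today=date(2026, 4, 20)):
        print(finding)
```

Each finding maps to a question an incident responder or auditor will ask: who owns this agent, and when was its scope last checked?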

What the AWS Agent Registry Signals

Today's announcement is a signal, not just a feature. AWS is building a platform for Agentic AI in which identity, runtime, observability, and (I expect) further governance components grow together into a coherent stack. That is the same pattern AWS used to make Lambda the default serverless runtime: platform first, ecosystem second.

For DACH enterprises this means: anyone standardising on Amazon Bedrock and Agent Registry today is building on a stack that considers governance from the start. Anyone building their own agents while ignoring the Registry is accumulating technical debt that will be expensive later. The reason is not missing tooling but a missing inventory.

The pattern is familiar. The timeline is tighter than it was with Shadow IT and cloud. The question is when your own organisation will treat governance as a prerequisite rather than as remediation.

I work on governance frameworks for Agentic AI in TM Forum Catalyst projects and DACH client engagements. Anyone with specific questions about Agent Registry, IAM policies for agents, or C5:2026 mapping: comment below or connect directly.